Every successful drug trial is alike; each unsuccessful drug trial fails in its own way. OK, maybe that’s a bit of a stretch (the whole Tolstoy thing is a tad forced), but there’s a kernel of truth to it. To wit, the path to demonstrating that a drug has the desired effect is generally straightforward, while there are myriad ways to obfuscate the fact that it doesn’t. Anavex Life Sciences recently announced results for a Phase 2b/3 trial that by all appearances failed its primary endpoints, but you certainly wouldn’t know it from the company’s ensuing press release. This has created quite a bit of confusion, which I’d argue is probably exactly the point. I’m going to do what I can to cut through that confusion and explain why, based on the data the company has presented, I believe they have erroneously reported that the trial met its primary endpoint when in fact it came up well short of statistical significance.

Let’s start by looking at the trial design, which identifies two co-primary endpoints. For some background on how to assess a trial with multiple primary endpoints, we can read through the relevant FDA guidance document. The main focus of the document is controlling for Type I error, or false positives. In a sense, that’s the FDA’s prime directive: approve drugs that have demonstrated a statistically significant treatment effect by reducing the likelihood of making a Type I error to an acceptable level. It’s up to the sponsor to control for Type II error (false negatives), though there is a bit of guidance for that as well. Controlling for Type I error isn’t everything (effect size and safety considerations are important as well), but it’s an essential guideline for the FDA in making decisions about drug approval.

Multiple primary endpoints give you multiple “shots on goal,” which is a classic example of the multiple comparisons problem. Basically, the FDA wants to approve drugs that look like Lionel Messi or Kylian Mbappé: demonstrably effective and highly likely to score on any good quality shot. By comparison, you could pull the shirtless guy on his fifth beer out of the stands and give him a hundred chances to score a penalty, and he’d almost certainly put one in eventually. If we only get to see his lone goal on SportsCenter and not the other ninety-nine tries, should we believe this mystery fan deserves a spot on the French or Argentine national team? I think we can agree that the answer is a resounding “no,” and this logic holds true no matter the number of tries, whether two or two hundred. We always have to adjust our evaluation based on how many shots on goal someone plans to take. The FDA guidelines cover this in detail, and though there are dozens of ways to make this correction, for two endpoints it’s pretty much always going to require halving the typical threshold for statistical significance, give or take. So, given the standard p < 0.05 threshold for statistical significance, a win on either endpoint alone requires p < 0.025 on that endpoint; if the trial instead requires meeting both endpoints, then each needs only p < 0.05, because demanding two hits makes a fluke less likely rather than more.
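To put rough numbers on the shots-on-goal problem, here is a minimal sketch of the arithmetic. It assumes the endpoints are statistically independent, which real trial endpoints usually are not, and it uses the simple Bonferroni split rather than any specific procedure from the FDA guidance. The function names are mine, purely for illustration.

```python
def familywise_error_rate(alpha: float, n_endpoints: int) -> float:
    """Chance of at least one false positive across independent endpoints,
    each tested at the same per-endpoint threshold alpha."""
    return 1.0 - (1.0 - alpha) ** n_endpoints


def bonferroni_threshold(alpha: float, n_endpoints: int) -> float:
    """Per-endpoint threshold that keeps the family-wise rate at or below alpha."""
    return alpha / n_endpoints


alpha = 0.05

# Win on EITHER of two endpoints, each tested at 0.05:
# the false positive rate nearly doubles.
unadjusted = familywise_error_rate(alpha, 2)

# Same trial, but with the halved per-endpoint threshold.
per_endpoint = bonferroni_threshold(alpha, 2)
adjusted = familywise_error_rate(per_endpoint, 2)

# Win only if BOTH endpoints hit at 0.05: when neither endpoint has a real
# effect, a false positive needs two independent flukes at once.
both_required = alpha ** 2

print(f"either endpoint at 0.05:  {unadjusted:.4f}")
print(f"either endpoint at {per_endpoint}: {adjusted:.6f}")
print(f"both endpoints at 0.05:   {both_required:.4f}")
```

Under these assumptions the unadjusted "either endpoint" design lets through false positives almost twice as often as advertised, while halving the threshold brings the rate back under 0.05. Requiring both endpoints never exceeds 0.05, which is why no adjustment is needed in that case.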

Calculating the Trial’s P-Value

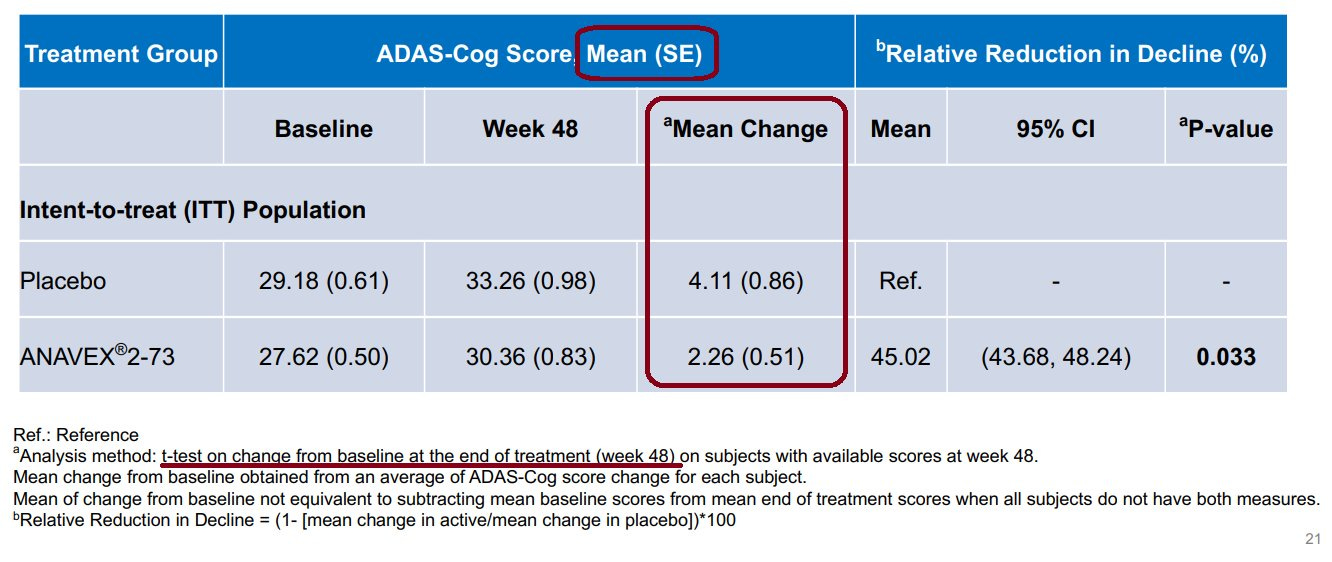

Taking a look at Anavex’s presentation deck, we see that only the ADAS-Cog primary endpoint was reported. They do a sort of half-hearted responder analysis for both endpoints, but the stated primary endpoints do not include responder analysis, so those don’t really apply. Here is the slide with the numbers that Anavex reported for their overall ADAS-Cog endpoint, with red annotations for the numbers and test details that are pertinent:

There are a few things to note about the slide. First, Anavex informed us that some patients did not have scores at either the start or finish of the trial (I assume just the finish, because why would they include a patient with no score at the start?) and so these patients were dropped from the mean change calculation, but apparently not from the reported baseline and 48-week averages (making these useless for us). They did not disclose how many patients were dropped, which is a red flag, but that’s fine for our purposes. We can set a baseline by using the full population size, which gives the most favorable p-value possible, since the result will pretty much uniformly get worse the more patients we drop. The second thing to note is that they reported standard error instead of standard deviation, which just means we have to do a little extra work to recover it: multiply each standard error by the square root of the sample size (but it’s quite tricky of them to make things look a lot better at a glance). The last is that they used a t-test to produce their reported p-value of p = 0.033, which is expected, but they did not specify whether the t-test was one-tailed or two-tailed. Let’s try the standard two-tailed t-test for ourselves and see what we get.
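For anyone who wants to run this kind of check themselves, here is a minimal sketch of a two-tailed t-test computed purely from summary statistics. A few caveats: it uses Welch's unequal-variance form, which is my choice and not necessarily what anyone else used; the tail probability comes from a simple numerical integration so that nothing beyond the standard library is needed; and the input numbers are made-up placeholders, not the values from the Anavex slide.

```python
import math


def t_upper_tail(t: float, df: float, steps: int = 2000) -> float:
    """P(T > t) for Student's t with df degrees of freedom, for t >= 0.
    Integrates the density over [0, t] with Simpson's rule (steps is even)."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))

    def pdf(x: float) -> float:
        return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

    h = t / steps
    total = pdf(0.0) + pdf(t)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    # The density is symmetric, so half the mass lies above zero.
    return 0.5 - total * h / 3


def welch_t_test(mean1, se1, n1, mean2, se2, n2):
    """Two-tailed Welch t-test from each arm's mean, standard error, and size."""
    # Recover the standard deviation from the reported standard error.
    sd1 = se1 * math.sqrt(n1)
    sd2 = se2 * math.sqrt(n2)
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2  # variance of each arm's mean
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    p_two_tailed = 2 * t_upper_tail(abs(t), df)
    return t, df, p_two_tailed


# Hypothetical placeholder inputs (NOT the trial's numbers):
# mean change, standard error, and patient count for each arm.
t_stat, dof, p_value = welch_t_test(1.0, 0.5, 100, 2.0, 0.5, 100)
print(f"t = {t_stat:.3f}, df = {dof:.1f}, two-tailed p = {p_value:.3f}")
```

Swapping in the means, standard errors, and arm sizes from a slide is all it takes; no patient-level data is required for this particular test.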

The verdict of our t-test is p = 0.065, well short of the required p < 0.025. Contrary to what Anavex CEO Christopher Missling said in an interview about the trial results (that “the correct calculation is each individual patient”), the summary metrics of the distribution (mean, standard deviation, and population size) are all we need in order to perform a t-test. It’s unclear what exactly Missling meant, though he may be confusing the t-test analysis with a paired difference test, which measures the change in a target metric of each subject before and after an intervention, pooled as a single group without a control population. A paired difference test would not be an appropriate evaluation of the trial data. It’s also still an open question how exactly Anavex arrived at a value of p = 0.033, but notice that our p-value is just about double the one quoted by Anavex, and keep in mind that the total population is a bit lower than what we used due to an unspecified number of dropouts. If we drop around 10% of patients in each arm we arrive at p = 0.066 and voilà: that’s precisely double the reported value. A one-sided p-value is exactly half the two-sided one whenever the observed effect points in the hypothesized direction, so this suggests that they may have inappropriately used a one-sided t-test to evaluate the trial results.
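The factor of two between one-tailed and two-tailed results is easy to verify. The sketch below uses a normal approximation to the t-distribution, which is reasonable for arms with hundreds of patients but is an assumption on my part, and it treats a positive test statistic as "favoring the drug" purely as a sign convention.

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1


def two_tailed_p(z: float) -> float:
    """Probability of a result at least this extreme in EITHER direction."""
    return 2 * (1 - std_normal.cdf(abs(z)))


def one_tailed_p(z: float) -> float:
    """Probability of a result at least this large in the hypothesized
    (positive) direction only."""
    return 1 - std_normal.cdf(z)


# Back out the test statistic implied by the two-tailed p = 0.066 above.
z = std_normal.inv_cdf(1 - 0.066 / 2)

print(f"implied z      = {z:.3f}")
print(f"two-tailed p   = {two_tailed_p(z):.3f}")
print(f"one-tailed p   = {one_tailed_p(z):.3f}")
# If the effect had pointed the other way, the one-tailed test gets no credit.
print(f"wrong-way p    = {one_tailed_p(-z):.3f}")
```

The same data gives a p-value half as large simply by declaring, after the fact, that only one direction ever mattered. That is why the choice of tails needs to be specified before the trial reads out.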

Trial by Distribution

It's worth spending some time thinking about how to properly treat the underlying data that defines the success of a trial like this one. The goal is to observe real, robust improvements on a somewhat subjective and noisy test (ADAS-Cog). That's not necessarily a knock on the test itself, but it's a reality of Alzheimer's disease that the outcome measure is an experiential assessment, and our evaluation of trial results must be informed by this. Further, Alzheimer's is characterized by a heterogeneous disease progression, which translates to a wide range of outcomes as a function of time for any given patient. What this all sums up to is the requirement that an intervention demonstrate a population-level treatment effect on a sample large enough to account for these confounding factors.

In practice, this means that there really isn't much value in diving into the rabbit hole of convoluted and esoteric secondary statistical tests. Once we start dropping patients, arbitrarily drawing responder thresholds, appealing to less powerful measures like “percentage improved,” etc., we're giving up the ghost, so to speak. Poke around long enough in distributions this noisy and you're bound to find something that “looks promising.” If you want to believe it, that's your prerogative; the trial's job, however, is to demonstrate a significant difference in the distribution means using the full dataset, full stop. Leave the secondary measures to a trial that is sufficiently powered for them.

One could do a bit better using, say, Tukey HSD and ANOVA, which are slightly more generous with their p-value here (I calculated best-case p = 0.0504), but they still come up well short. And here's the thing—while these tests are a bit more rigorous, you're essentially stuck without an option to report a spurious one-tailed result. If I were perhaps a more cynical person, I'd entertain the possibility that the statisticians (and the leadership) at Anavex are well aware of this fact. Either way, company leadership and the “KOLs” that provide guidance—whether directly to the company as we saw during the vacuous follow-up conference call on Monday or via absurd stock promotion firms—are well aware of the issues in the trial analysis at this point. The response appears to be an effort to dig a large enough hole in the sand that everyone’s head can fit with room to spare. It’s one thing to spin an unsuccessful trial into a positive, but much like we saw with Anavex’s trial in Rett syndrome earlier this year and with the exploratory publication that preceded this trial, they appear perfectly comfortable with deceptive statistical analyses and selective data reporting. It’s a damn shame for the patients who are counting on them to be honest and forthcoming.

AVXL Slide#6

Mann-Whitney used in place of t-test, one and two tailed, for ADCS-ADL

Anavex Life Sciences presenting Thursday, January 12, 2023 at 08:15 AM PST

The latest conference (JPMorgan41) sheds light on why a t-test was not used.

Slide #6 reveals that they used the Mann-Whitney U test in place of a t-test.

Assumptions and formal statement of hypotheses

Although Mann and Whitney[1] developed the Mann–Whitney U test under the assumption of continuous responses with the alternative hypothesis being that one distribution is stochastically greater than the other, there are many other ways to formulate the null and alternative hypotheses such that the Mann–Whitney U test will give a valid test.[2]

A very general formulation is to assume that:

All the observations from both groups are independent of each other,

The responses are at least ordinal (i.e., one can at least say, of any two observations, which is the greater),

Under the null hypothesis H0, the distributions of both populations are identical.[3]

The alternative hypothesis H1 is that the distributions are not identical.

Under the general formulation, the test is only consistent when the following occurs under H1:

The probability of an observation from population X exceeding an observation from population Y is different (larger, or smaller) than the probability of an observation from Y exceeding an observation from X; i.e., P(X > Y) ≠ P(Y > X) or P(X > Y) + 0.5 · P(X = Y) ≠ 0.5.

https://en.wikipedia.org/wiki/Mann–Whitney_U_test
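To make the quantity P(X > Y) + 0.5 · P(X = Y) from the quoted formulation concrete, here is a minimal sketch that computes the U statistic by brute-force pair counting. The scores are hypothetical toy values, not trial data, and the sketch stops at the statistic itself without deriving a p-value.

```python
def mann_whitney_u(x, y):
    """U statistic for sample x against sample y: the number of (x_i, y_j)
    pairs where x_i is larger, with ties counted as half a pair."""
    u = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u += 1.0
            elif xi == yj:
                u += 0.5
    return u


# Hypothetical ordinal scores for two small groups.
x = [3, 5, 6, 8]
y = [1, 2, 5, 7]

u_x = mann_whitney_u(x, y)
u_y = mann_whitney_u(y, x)
n_pairs = len(x) * len(y)

# Sample estimate of P(X > Y) + 0.5 * P(X = Y); 0.5 means no tendency
# for either group to score higher.
prob_x_higher = u_x / n_pairs

print(f"U_x = {u_x}, U_y = {u_y}, pairs = {n_pairs}")
print(f"estimated P(X > Y) + 0.5 * P(X = Y) = {prob_x_higher}")
```

Note that the test only uses the ordering of the scores, not their magnitudes, so it answers a different question than a comparison of means. Like the t-test, it can be run one-tailed or two-tailed.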

slide 6.jpg
